For continuous intelligence applications, the relationship between prediction and prescription is richer than described in the classic framework of analytics capabilities:

Part 1 of this article discussed three reasons why, when working with streaming data, you really can't prescribe if you don't predict. Part 2 below describes two strategies for integrating predictions and prescriptions.

1. Custom loss function

This strategy works when prescriptions involve a limited number of parameters. The strategy consists in formulating the optimization challenge as a single criterion (the "custom loss function"), and then finding the predicted values of key parameters that minimize that criterion.

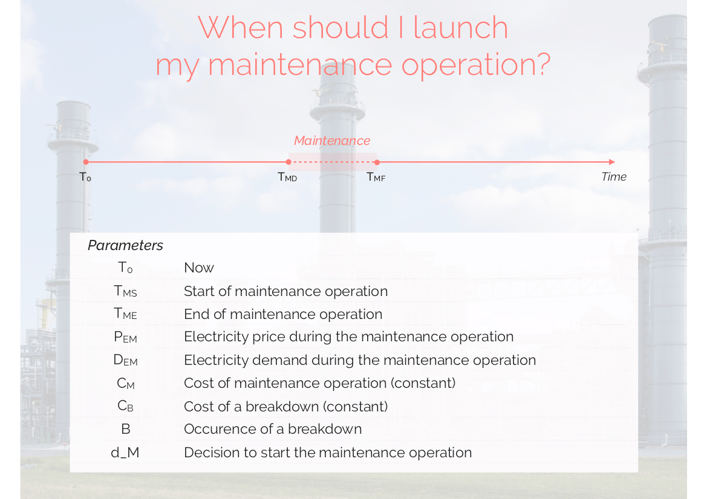

Let's illustrate this with the example of an electricity plant manager wondering when to start a maintenance operation.

Building the custom loss function

Based on these parameters, we can write the following loss function:

Loss = [(1 - d_M) x B x CB] + [d_M x (CM + PEM x DEM)],

for each upcoming time T.

- [(1 - d_M) x B x CB] is the cost of doing nothing (and risking a breakdown).

- [d_M x (CM + PEM x DEM)] is the cost of the maintenance operation (direct cost and lost revenue).

Our goal is to find the time TMS that minimizes the loss function.

Identifying and predicting the random parameters

It is quite easy to spot the random parameters in our loss function:

- We are selling electricity to the market. The market price changes continuously. PEM is random.

- Electricity demand also changes continuously. DEM is random.

- We are not sure when a breakdown could occur. B is random.

We are therefore going to predict PEM, DEM and B for each upcoming time T (making sure we are using proper cross-validation processes, since we are working on streaming data).

Minimizing the custom loss function

Injecting our predictions into the loss function will give us one predicted loss for each upcoming time T.

We will start the maintenance operation at the time TMS that minimizes our loss - continuously updating our predictions, thus our prescriptions.

2. Enhanced operations research

Custom loss functions are convenient for relatively simple prediction x prescription challenges, but quickly become intractable when the number of parameters, business rules and constraints increases.

For example, we have assumed above that our electricity plant manager worried about the timing of a single maintenance operation (presumably for a single industrial asset).

In reality, that manager is probably trying to optimize a maintenance schedule that includes dozens of maintenance operations, and juggling with such additional, "plant-level" constraints as:

- There can't be more than N1 simultaneaous maintenance operations at any given time.

- We can't affect more than N2 engineers to maintenance at any given time.

- Each industrial asset must be maintained at least once every TMAX time steps - TMAX being different for every asset.

Enter operations research...

Enhanced operations research

The strategy we call "enhanced operations research" is conceptually simple, and a continuation of custom loss functions:

- First, we are going to build one loss function (and the corresponding predictions) for each industrial asset.

- Then, we will feed these loss functions, together with our plant-level constraints, to a standard operations research algorithm.

- We will refresh the predictions and the constraint optimization at every time step.

Voilà! We now have a solution that:

- Optimizes at multiple levels

- Updates continuously

- Informs prescriptions with predictions

We could further complexify the challenge in many ways (optimal selection of prediction hyper-parameters, additional layers of optimization, multiple prescription horizons...) without altering the framework.

***

Datapred uses continuous intelligence to help industrial companies buy raw materials and energy. Don't hesitate to contact us for questions on procurement optimization. You can also visit this page for a list of external resources on continuous intelligence.